Current Research

Active Vision Based Minimalist Quadrotor Design

Our goal is to develop a micro-quadrotor that can fly autonomously with on-board computation and sensing, using only cameras and an IMU. The navigation algorithms we develop are active and task-driven, and they mimic the strategies used by insects and birds. The general concept of Active Vision is to move in a way that makes the perception problem easier. We design both the "movement" and the "perception" algorithms.

3D Perception and Segmentation

Object segmentation in unknown, cluttered scenes is a fundamental problem for any robot that interacts with its environment. We are developing segmentation techniques for two settings. For static scenes, we leverage a symmetry shape prior to obtain object segments from partial 3D reconstructions of cluttered environments. For dynamic scenes of a human performing an action, we jointly segment and track the manipulated objects using a contact-based active approach that relies on dense point cloud model tracking.
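To make the idea of a symmetry prior more concrete, the sketch below scores how well a point cloud agrees with a candidate mirror plane: each point is reflected across the plane, and we count how many reflections land close to an observed point. This is a hypothetical illustration, not the method used in our work. The names `reflect` and `symmetry_score`, the tolerance `tol`, and the brute-force nearest-neighbor search are all assumptions made for brevity.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def reflect(p: Sequence[float], normal: Sequence[float], offset: float) -> Point:
    """Reflect p across the plane n . x = offset (normal assumed to be unit length)."""
    d = sum(pi * ni for pi, ni in zip(p, normal)) - offset  # signed distance to plane
    x, y, z = (pi - 2.0 * d * ni for pi, ni in zip(p, normal))
    return (x, y, z)


def symmetry_score(points: List[Point], normal: Point, offset: float,
                   tol: float = 0.01) -> float:
    """Fraction of points whose mirror image lies within tol of some observed point.

    Hypothetical helper: uses an O(n^2) nearest-neighbor search and ignores
    occlusion, which a real method for partial reconstructions must handle.
    """
    if not points:
        return 0.0
    matched = 0
    for p in points:
        q = reflect(p, normal, offset)
        if min(math.dist(q, r) for r in points) <= tol:
            matched += 1
    return matched / len(points)


# Four points that are mirror-symmetric about the plane x = 0.
pts = [(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0), (-0.5, 1.0, 0.0), (0.5, 1.0, 0.0)]
print(symmetry_score(pts, (1.0, 0.0, 0.0), 0.0))  # 1.0 (plane x = 0)
print(symmetry_score(pts, (0.0, 1.0, 0.0), 0.0))  # 0.5 (plane y = 0)
```

A segmentation pipeline could, under these assumptions, search over candidate planes and group points that support a high-scoring plane into an object hypothesis. With partial 3D reconstructions, reflected points that fall into unobserved space would need to be treated as uncertain rather than as mismatches.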

Event-based cameras record only per-pixel brightness changes, rather than full frames as a traditional camera does. This makes the sensor well suited to the high-speed, dynamic, and high-dynamic-range scenarios often encountered in autonomous robot navigation. We work on the fundamental problems of odometry/ego-motion estimation, motion segmentation, and SLAM using event-based sensors.
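The sketch below illustrates the commonly used idealized model of how an event camera differs from a frame camera. A pixel emits an event (x, y, timestamp, polarity) only when its log intensity changes by more than a contrast threshold, so static regions produce no data at all. This is a simplification: real sensors fire asynchronously per pixel and can emit several events for one large change, whereas here two synthetic frames are compared and at most one event is emitted per pixel. The function name `frames_to_events` and the values of `threshold` and `eps` are illustrative assumptions.

```python
import math
from typing import List, Tuple

Event = Tuple[int, int, float, int]  # (x, y, timestamp, polarity)


def frames_to_events(prev: List[List[float]], curr: List[List[float]],
                     t: float, threshold: float = 0.2,
                     eps: float = 1e-3) -> List[Event]:
    """Emit one event per pixel whose log-intensity change exceeds the threshold.

    Hypothetical helper for illustration; eps avoids log(0) on dark pixels.
    """
    events: List[Event] = []
    for y, (row_prev, row_curr) in enumerate(zip(prev, curr)):
        for x, (p, c) in enumerate(zip(row_prev, row_curr)):
            delta = math.log(c + eps) - math.log(p + eps)
            if delta >= threshold:
                events.append((x, y, t, 1))    # brightness increased
            elif delta <= -threshold:
                events.append((x, y, t, -1))   # brightness decreased
    return events


prev = [[0.5, 0.5], [0.5, 0.5]]
curr = [[0.5, 1.0], [0.2, 0.5]]
print(frames_to_events(prev, curr, t=0.01))
# [(1, 0, 0.01, 1), (0, 1, 0.01, -1)]
```

Only the two pixels that changed produce output. This sparsity, combined with very fine timestamps, is what makes the sensor attractive for fast motion and difficult lighting.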

Researchers: Anton Mitrokhin and Chethan M. Parameshwara

Vision, Language and Cognition

Computer Vision and Natural Language Processing deal with two modalities that are generally treated as applications of Machine Learning. We focus on mathematically modeling these modalities in a common space, so that higher-level semantics can be learned from language and these concepts can then bring general intelligence, or common-sense reasoning, to computer vision.

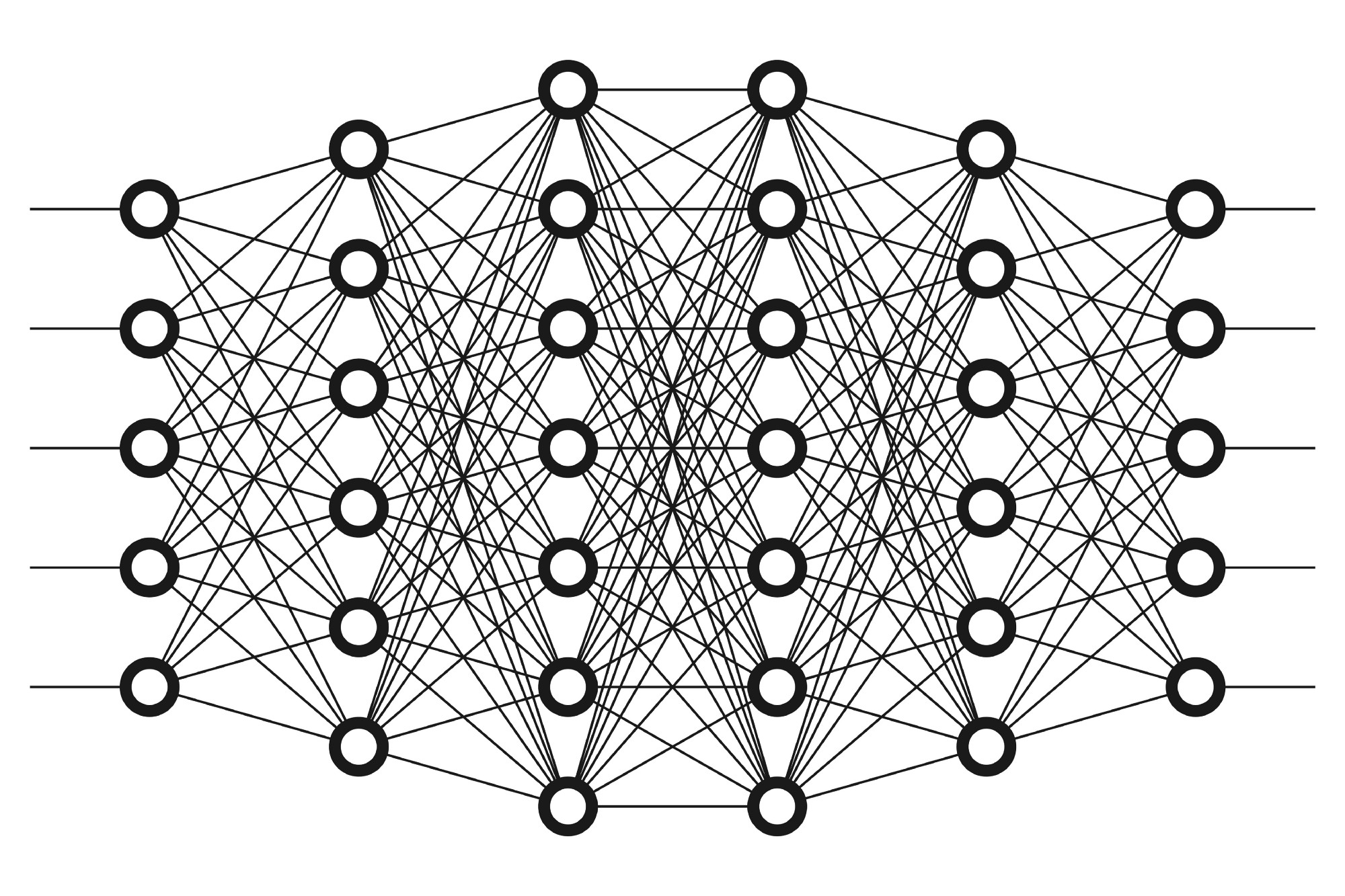

Deep Learning

Although Deep Learning has gained considerable popularity recently, most work in the field is application-driven and offers little mathematical intuition. We work on designing better and more efficient Deep Learning architectures and learning algorithms, and we apply these methods to make computer vision and navigation algorithms faster and more robust.

Human Robot Interaction

Robots can become part of everyday life only when they interact with humans naturally and people need little or no effort to learn how to use them. Our goal is to build robot systems with which humans can interact as naturally as they do with one another, collaborating to accomplish tasks more efficiently without the robots becoming annoying.

Cognition with Simulated Neurons

We believe that vision and motion are dynamic, intertwined processes that unfold in time, and that the animal brain has adapted specifically to act as a dynamical system that processes temporal data and learns to achieve goals through neural plasticity. This work straddles the line between cognitive neuroscience and A.I. by reverse-engineering our current understanding of how the brain operates as a dynamical system. We aim to provide novel robotic algorithms as well as a platform for testing neuro-cognitive theories. We hope that these neuro-dynamical approaches will give next-generation robots the ability to react robustly to new and unforeseen experiences in the real world, a limitation that has so far prevented robotics from becoming ubiquitous in society.